Building Wwise plugins with Pure Data + Heavy (+ VS)

jcbellido June 22, 2020 [Code] #Development #Programming #Python #Wwise #PureData

Would you like to create a Wwise FX plugin but have no audio programmer at hand? This approach could help you. This piece examines the niche Pure Data --> Wwise toolchain developed and made public by Enzien a couple of years ago. It's a smart and well-crafted tool that delivers what it promises.

Also, go out there and find an audio programmer.

The Pure Data - Heavy - Visual Studio - Wwise chain

If you look around you'll find a number of articles that describe this approach in more or less detail:

I can confirm the process works and it's possible to port it to Python3 (see below) if you need to.

Without any build and tooling support the process looks like this:

- An audio designer / tech audio guy builds a Pure Data patch.

- The hvcc chain parses the .pd file and generates an intermediate heavy representation.

- The tool chain wraps that intermediate representation in heavy.

- Depending on the generator you've selected (Unity, Wwise, VST, ...) a template is selected.

- Then it combines the intermediate representation and the template and generates your target.

- Using Visual Studio, Xcode or whatever, you compile your target.

- The resulting objects must be copied to Wwise's plugins directory.

- Open Wwise.

- Include the plugin (either source or FX) somewhere in the structure.

- Hook the Syncs.

- ... Go back to the first step to iterate on the plugin if polishing is required.
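The mechanical middle of that list (generate, compile, copy) can be sketched as a small Python 3 script. Take it as a rough sketch under assumptions, not as the way hvcc is meant to be driven: the `-o`, `-n` and `-g` flags are how I remember the hvcc command line and should be checked against the repository, the `hvcc.py` location, the solution path, the list of built files and the Wwise plugin directory are all hypothetical parameters you have to supply, and `/p:PlatformToolset` is a standard MSBuild property used here only to retarget the project.

```python
import shutil
import subprocess
from pathlib import Path


def hvcc_command(patch, out_dir, name, generator="wwise", hvcc_script="hvcc.py"):
    """Build the hvcc invocation that turns a .pd patch into target sources.

    The flag names are an assumption; verify them against the hvcc README.
    """
    return [
        "python", str(hvcc_script), str(patch),
        "-o", str(out_dir),   # where hvcc writes the generated project
        "-n", name,           # name given to the generated plugin
        "-g", generator,      # which generator / template to use
    ]


def msbuild_command(solution, configuration="Release", toolset=None):
    """Build the MSBuild invocation; `toolset` optionally retargets the project."""
    cmd = ["msbuild", str(solution), f"/p:Configuration={configuration}"]
    if toolset is not None:
        cmd.append(f"/p:PlatformToolset={toolset}")
    return cmd


def run_chain(patch, name, out_dir, solution, built_files, wwise_plugin_dir, toolset=None):
    """Generate, compile and deploy one plugin. Every path comes from the caller."""
    subprocess.run(hvcc_command(patch, out_dir, name), check=True)
    subprocess.run(msbuild_command(solution, toolset=toolset), check=True)
    target = Path(wwise_plugin_dir)
    for built in built_files:
        # Copy each compiled artifact next to Wwise's other plugins.
        shutil.copy2(built, target / Path(built).name)
```

Keeping the command builders separate from `run_chain` makes it possible to print or log the exact commands before anything runs, which helps when the person pressing the button is not a programmer. Opening Wwise, adding the plugin and hooking the Syncs remain manual.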

This process is something a programmer is more or less used to doing, albeit begrudgingly. But I would need an extremely motivated audio designer to go through these steps without a riot breaking out in the process.

Enzien (see below) explored a solution where you could upload your patch to a website and it returned the compiled artifact. That reduces the friction, somewhat. And that's perhaps something you could deploy in your company. But, realistically, how often is this chain going to be used? If your goal is to generate Sources / FX for Wwise I have some trouble finding the ROI. Perhaps I'm missing something.

Audiokinetic has its own templating tools: wp

The tool chain described above makes sense when you're targeting a number of different systems. But if your goal is to cover only Wwise, Audiokinetic has their own toolset in place: Plugin tools. You can see them in action in this video:

I wonder if it'd make more sense to expand Wwise's tools directly, perhaps hooking the hvcc compiler inside it somehow. What's clear is that the Visual Studio template included in the sources is out of date and should be updated to VS2019. As of today, retargeting the solution will do the trick.

Enzien Audio

The patch compiler used in this toolchain was developed by Enzien Audio. As far as I can tell the company closed a couple of years ago, but they uploaded parts of their tech stack to the enzienaudio GitHub. I've been mainly looking into the patch compiler, hvcc. It's a smart PD / Max patch to code compiler (transpiler? something-piler for sure). It's worth mentioning here that hvcc can generate outputs for Unity, VST or web-audio, among others.

Modernizing hvcc to Python3

Unfortunately the code in the repo is written in Python 2.7 and I'm trying to keep my codebases in Python3. Since this was my first time trying to do this I took a look around:

Python-Modernize and a bit of wiggly-waggly with encodings did the trick. But if you decide to take this route, please keep in mind that the first thing I did was to reduce the scope of the tool to my precise use case: Pure Data --> Wwise plugin. That made the code to transform way smaller and easier to handle.
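To give an idea of what the encoding wiggling tends to look like in a port like this, here is a minimal sketch. It is not code from hvcc: the helper names `read_text` and `write_text` are hypothetical, and UTF-8 is my assumption for both patches and generated sources. The point is only that Python 3 separates bytes from text, so every file access that Python 2 let you do implicitly now wants an explicit encoding.

```python
import io


def read_text(path, encoding="utf-8"):
    """Read a file (for instance a .pd patch) as text with an explicit encoding."""
    with io.open(path, "r", encoding=encoding) as handle:
        return handle.read()


def write_text(path, text, encoding="utf-8"):
    """Write generated text back to disk without translating line endings."""
    with io.open(path, "w", encoding=encoding, newline="") as handle:
        handle.write(text)
```

`io.open` behaves the same way in Python 2.7 and Python 3, so helpers like these can go in before the switch and keep the port incremental.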

About Pure Data

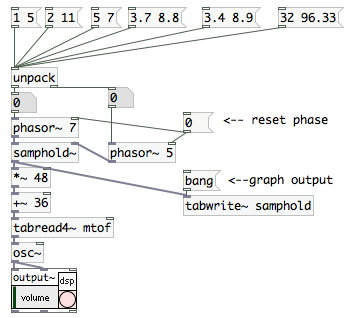

I think we all agree if I write that vanilla Pure Data evokes the worst of Soviet brutalism. Jagged lines, spartan black and white, mysterious words, tildes everywhere and that distinct Tcl tint. Don't panic, it's going to be all right.

At the same time, it's one fascinating piece of multimedia software. Probably the closest you can get to the metal if you want to use a computer and stay away from C++. The community has been there forever, the resources are abundant and it's extremely well documented. It's so alive that other projects like Purr Data are trying to bring the user experience to this century.

There are many tutorials freely available on YouTube that cover pretty much everything: synthesis, FX or video:

- Lawrence Moore has 2 full courses uploaded around 2016. The material is instructive but can be extremely dry.

- Really Useful Plugins has bite-sized techniques. These videos are concise to a blink-and-miss-it degree. Quite fun to follow along.

- GEM video generation. Because, of course, PD can generate video too.

The original Pure Data was developed by Miller Puckette and, if you're interested in the theory behind electronic music, he's kindly published his book in HTML form.

In short, if you're interested in learning more about this power tool, the community has your back.

Conclusions

To me, if you're interested in working with audio and you're technically inclined, learning the basics of Pure Data is a reasonable investment. Regarding the workflow described here, as it is right now, I can't see it working at any scale. Unless I'm missing something, it seems like a neat trick, a bit gimmicky even. It'd need quite a lot of work to become production ready. Not to mention that if you took care of exposing this mechanism to your company, expecting the audio designers to use it, you'd most probably end up building the patches yourself.

Bellido out, good hunt out there!

/jcb